
AI Vulnerability Database

Open database of failures in general-purpose AI systems

Mission

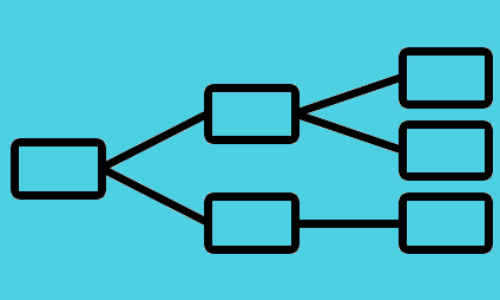

AI Vulnerability Database (AVID) is an open-source knowledge base of failure modes for general-purpose AI (GPAI) systems, including open-weight generative models, closed-API systems, developer tools, and end-to-end AI applications and agents. The goals of AVID are to:

- Maintain a high-fidelity database of vulnerabilities and reports with evidence, metadata, and reproducible evaluation details

- Support builders evaluating GPAI systems across model, tool, and application layers

- Map failures to a library of taxonomies, with the AVID taxonomy as one of several reference schemes

What We Do

Our primary focus is the Database of evaluation examples with structured, reproducible failure evidence. We pair this with a Taxonomy Library: a growing set of frameworks used to classify records, where the AVID taxonomy is one of many. We also periodically release blog posts covering ongoing trends in AI risk management.