Taxonomy Library

AVID’s primary focus is the database of GPAI failure evidence, and taxonomies are used to classify and risk-score those records. We maintain a library of taxonomies, including the native AVID taxonomy, to support this purpose.

AVID Taxonomy

The AVID taxonomy serves as a common foundation for AI engineering, product, and policy teams to manage potential risks across stages of developing and operating GPAI systems. In spirit, this taxonomy is analogous to MITRE ATT&CK for cybersecurity vulnerabilities, and MITRE ATLAS for adversarial attacks on ML systems.

At a high level, the AVID taxonomy consists of two views, intended to facilitate the work of two different user personas.

- Effect view: for the auditor persona that aims to assess risks for a GPAI system and its components.

- Lifecycle view: for the developer persona that aims to build an end-to-end GPAI system while being cognizant of potential risks.

Based on case-specific needs, people involved with building a GPAI system may need to operate as either of the above personas.

Effect (SEP) view

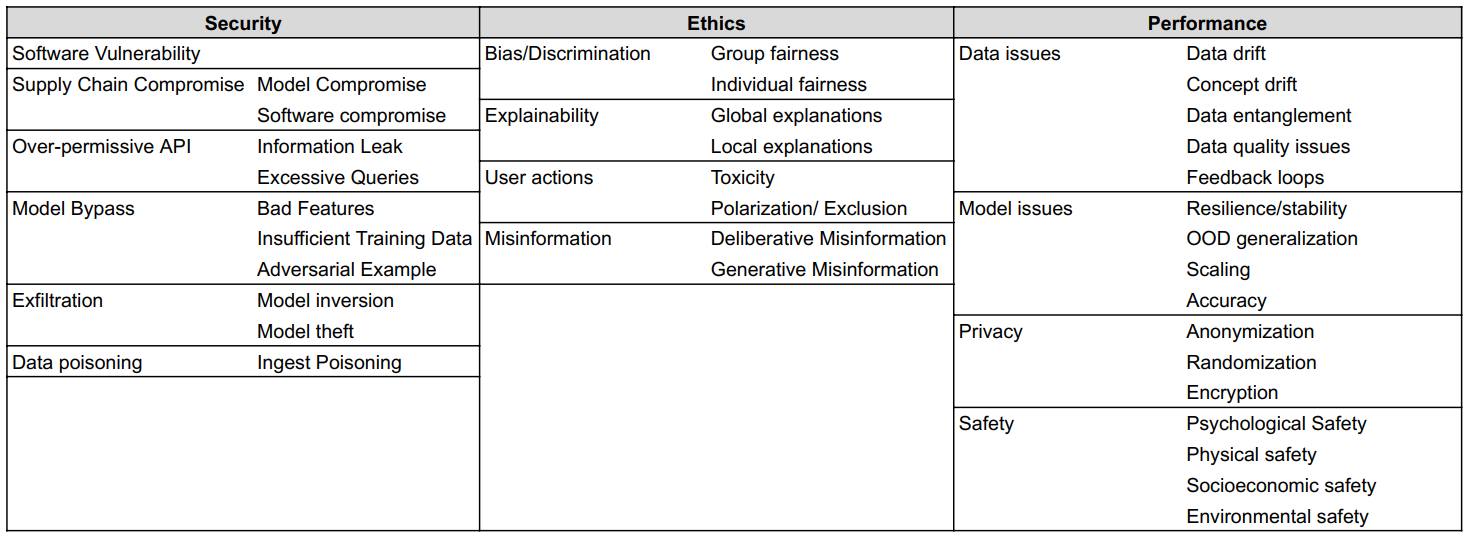

The domains, categories, and subcategories in this view provide a ‘risk surface’ for the AI artifact being evaluated, whether dataset, model, or whole system. This view contains three top-level domains: Security (S), Ethics (E), and Performance (P).

Each domain is divided into a number of categories and subcategories, each of which is assigned a unique identifier. Figure 1 presents a holistic view of the AVID taxonomy matrix. See the individual pages for Security, Ethics, and Performance for more details.

Figure 1. The AVID Taxonomy Matrix.

Lifecycle view

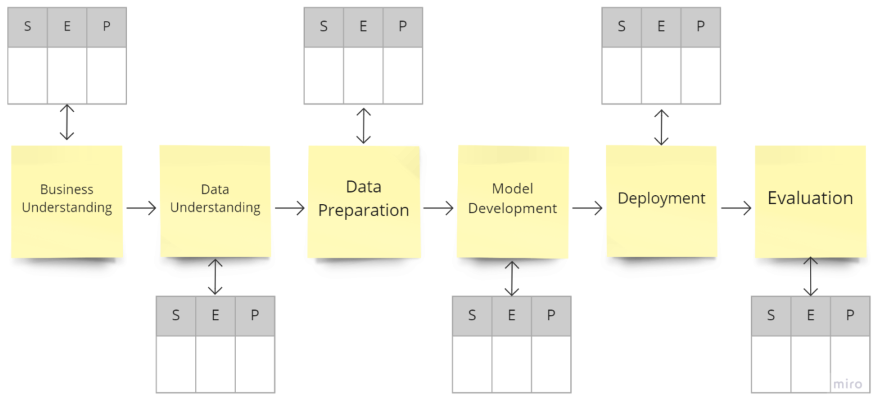

The stages in this view represent the high-level sequential steps of a typical ML workflow. Following the widely used Cross-Industry Standard Process for Data Mining (CRISP-DM) framework, we designate six stages in this view.

| ID | Stage |
|---|---|
| L01 | Business Understanding |
| L02 | Data Understanding |
| L03 | Data Preparation |
| L04 | Model Development |
| L05 | Evaluation |
| L06 | Deployment |
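As a minimal sketch, the six stage IDs in the table can be held in an ordered lookup. The class and function names here (`LifecycleStage`, `stage_name`, `stages_up_to`) are illustrative assumptions, not part of any AVID tooling.

```python
from enum import Enum


class LifecycleStage(Enum):
    """Lifecycle stages from the table above, keyed by their IDs."""

    L01 = "Business Understanding"
    L02 = "Data Understanding"
    L03 = "Data Preparation"
    L04 = "Model Development"
    L05 = "Evaluation"
    L06 = "Deployment"


def stage_name(stage_id: str) -> str:
    """Return the stage name for an ID such as "L04"; reject unknown IDs."""
    try:
        return LifecycleStage[stage_id].value
    except KeyError:
        raise ValueError(f"unknown lifecycle stage ID: {stage_id!r}") from None


def stages_up_to(stage_id: str) -> list[str]:
    """List stage IDs in workflow order, up to and including stage_id."""
    ordered = [stage.name for stage in LifecycleStage]  # definition order
    return ordered[: ordered.index(LifecycleStage[stage_id].name) + 1]
```

Because `Enum` preserves definition order, the sequential nature of the stages comes for free; `stages_up_to("L03")` walks the workflow from Business Understanding through Data Preparation.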

Figure 2 reconciles the two views of the AVID taxonomy. We conceptually represent the space of potential risks in three dimensions: the risk domain (S, E, or P) that a specific vulnerability pertains to; the (sub)category within the chosen domain; and the development lifecycle stage of the vulnerability. The SEP and lifecycle views are simply two different sections of this three-dimensional space.

Figure 2. SEP and Lifecycle views of the AVID taxonomy represent different sections of the space of potential risks in an AI development workflow.
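One way to picture the three-dimensional framing is to treat each risk as a point with a domain, a (sub)category, and a lifecycle stage, and to derive each view by grouping on different coordinates. The sketch below is only an illustration of that idea: the `RiskPoint` type, the two view functions, and the category identifiers such as `S-example-a` are hypothetical placeholders, not real AVID identifiers or code.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskPoint:
    domain: str    # "S", "E", or "P"
    category: str  # placeholder (sub)category identifier, not a real AVID ID
    stage: str     # lifecycle stage ID, "L01" through "L06"


def sep_view(points):
    """Section by (domain, category): which stages does each one touch?"""
    view = defaultdict(set)
    for p in points:
        view[(p.domain, p.category)].add(p.stage)
    return dict(view)


def lifecycle_view(points):
    """Section by stage: which (domain, category) pairs arise at each stage?"""
    view = defaultdict(set)
    for p in points:
        view[p.stage].add((p.domain, p.category))
    return dict(view)


# Made-up sample points, purely for illustration.
points = [
    RiskPoint("S", "S-example-a", "L04"),
    RiskPoint("S", "S-example-a", "L06"),
    RiskPoint("E", "E-example-a", "L03"),
    RiskPoint("E", "E-example-b", "L03"),
    RiskPoint("P", "P-example-a", "L05"),
]
```

Both functions read the same set of points; only the grouping key differs, which mirrors the statement that the two views are sections of one space.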

Auxiliary Taxonomies

For machine readability, taxonomies are shared using the standardized MISP format. This enables support for additional taxonomies in the AVID taxonomy library. The following auxiliary taxonomies are maintained in avid-schema/taxonomy: